Welcome to the A-Z Glossary of SEO Terms & Definitions.

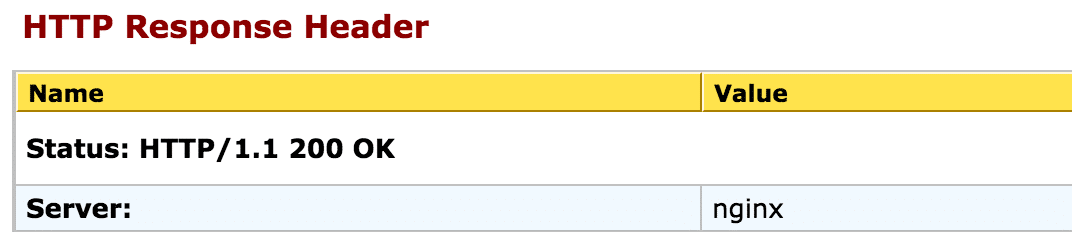

The 200 (OK) HTTP status code indicates that the request for a webpage (e.g. http://example.com) was successfully received, understood, and accepted.

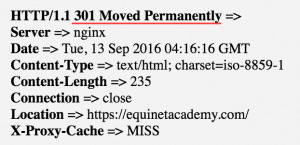

If a page has permanently changed URLs (e.g. www.example.com/old-page has changed to www.example.com/new-page), you should implement a 301 redirect and update any links that point to the old URL so that they point to the new one.

A 301 (Moved Permanently) HTTP status code indicates that the requested resource has been permanently assigned a new URI (Uniform Resource Identifier).

According to Google, 301 redirects pass 100% of PageRank from the old page to the redirect target, although Google had previously indicated that some PageRank was lost.

If a page is temporarily undergoing maintenance, you would implement a 302 redirect. Note that search engines may not index the redirect target, because a 302 signals a temporary redirection. If your page is moving permanently, use a 301 instead.

According to Google, 302 redirects also pass 100% of PageRank.

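The 307 (Temporary Redirect) status code was introduced in HTTP/1.1 as the successor to the 302, although many pre-HTTP/1.1 user agents do not understand it. It addresses the way many user agents handled 302 responses: they would change the request method (e.g. from POST to GET) when following the redirect, whereas a user agent that receives a 307 must not change the request method.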
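As a rough illustration of how these redirects look to a browser or crawler, here is a minimal sketch of a Python WSGI application that answers requests for moved pages with a 301 or 302 and a `Location` header. The paths in the hypothetical `REDIRECTS` table are made up, and on a real site redirects are usually configured in the web server or CMS rather than written by hand.

```python
# Hypothetical redirect table: old path -> (status line, new path)
REDIRECTS = {
    "/old-page": ("301 Moved Permanently", "/new-page"),
    "/summer-sale": ("302 Found", "/holding-page"),  # temporary, e.g. during maintenance
}


def redirect_app(environ, start_response):
    """Minimal WSGI app: redirect known old paths, serve 200 OK for everything else."""
    path = environ.get("PATH_INFO", "/")
    if path in REDIRECTS:
        status, target = REDIRECTS[path]
        # The Location header tells browsers and crawlers where the resource now lives.
        start_response(status, [("Location", target), ("Content-Length", "0")])
        return [b""]
    body = b"Page content"
    start_response("200 OK", [("Content-Type", "text/plain"),
                              ("Content-Length", str(len(body)))])
    return [body]
```

To try it locally, you could serve it with `wsgiref.simple_server.make_server("", 8000, redirect_app).serve_forever()` and request `/old-page` with a browser or `curl -I`.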

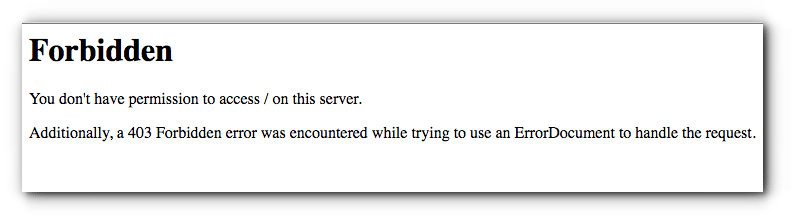

A 403 (Forbidden) status code indicates that the server understood the request but refuses to fulfil it, for example because the user does not have the necessary permissions. The server may explain why in its response.

Google will remove your content from its index very quickly when it sees a 403 status code. Many sites have lost large numbers of pages from the Google index due to improper use of 403s when they should have used a more temporary status code such as 503 (Service Unavailable), which does not lead to immediate removal from the index.

A 404 (Not Found) status code indicates that the requested resource could not be found. It may have moved, or it may become available in the future.

Do 404s hurt your site rankings? Not directly, at least. Google prefers that your “dead” pages return a proper 404 or 410 response code rather than a “soft 404” (a page that tells users it doesn’t exist but returns a 200 OK or another non-error status code, which sends search engines an unclear signal).

Inbound links and other external references to 404 pages should also be updated to point to relevant live (200 OK) web pages.

A 410 (Gone) status code is used for requests for resources that are permanently unavailable and have no known forwarding address.

If the server is unsure whether the condition is permanent or temporary, a 404 status code should be used instead.

A 500 status code is a generic error message given when the server encounters unexpected conditions, preventing it from fulfilling the request.

A 503 (Service Unavailable) status code indicates that the server is temporarily unavailable, for example due to scheduled maintenance or overload. When Googlebot comes across a 503, it does not immediately deindex the page and will try to crawl it again later. However, if the page keeps returning a 503 on repeated crawl attempts, it will eventually be removed from the index.
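A minimal sketch of a maintenance response as a Python WSGI application. It returns a 503 together with a `Retry-After` header, a standard HTTP header giving the number of seconds a client should wait before retrying. The `maintenance` flag and the one-hour window are assumptions for illustration, and crawlers may treat `Retry-After` only as a hint.

```python
RETRY_AFTER_SECONDS = 3600  # assumed one-hour maintenance window


def make_app(maintenance):
    """Return a WSGI app that serves 503 while `maintenance` is True, 200 otherwise."""
    def app(environ, start_response):
        if maintenance:
            body = b"Down for scheduled maintenance. Please try again later."
            start_response("503 Service Unavailable", [
                ("Content-Type", "text/plain"),
                ("Content-Length", str(len(body))),
                # Tells clients, including crawlers, when it is worth retrying.
                ("Retry-After", str(RETRY_AFTER_SECONDS)),
            ])
        else:
            body = b"Page content"
            start_response("200 OK", [("Content-Type", "text/plain"),
                                      ("Content-Length", str(len(body)))])
        return [body]
    return app
```

Keeping the 503 window short matters: a page that keeps returning 503 will eventually drop out of the index.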

Content that is above the fold is displayed immediately, without the user having to scroll down the page.

Google frowns upon pages that have too many ads, or too little content, above the fold. If users have to scroll past ads to reach the content, that makes for a bad user experience and your rankings can decline (see Top Heavy).

Ahrefs is one of the best backlink checker tools in the SEO industry. Its suite of tools also extends to keyword research and a popular content explorer.

An absolute URL contains the full form of the URL, including the protocol and domain (e.g. http://www.example.com/resource-page/), and is recommended for proper on-page SEO.

See also: Relative URLs
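Relative URLs are resolved against the URL of the page they appear on. Python’s standard `urllib.parse.urljoin` shows how a browser or crawler turns them into absolute URLs; the helper name `to_absolute` and the page URL below are hypothetical examples.

```python
from urllib.parse import urljoin


def to_absolute(page_url, href):
    """Resolve an href found on page_url into an absolute URL."""
    return urljoin(page_url, href)


page = "http://www.example.com/blog/post-1/"
print(to_absolute(page, "../resource-page/"))         # http://www.example.com/blog/resource-page/
print(to_absolute(page, "/resource-page/"))           # http://www.example.com/resource-page/
print(to_absolute(page, "http://www.example.org/x"))  # already absolute, returned unchanged
```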

The AMP Project is an open-source initiative aiming to make the web better for all. The project enables the creation of websites and ads that are consistently fast, beautiful and high-performing across devices and distribution platforms.

AJAX stands for Asynchronous JavaScript and XML. It is a set of client-side techniques for exchanging data with a server or database without reloading or refreshing the page.

This way of displaying information is quicker and can provide a better user experience; however, Google can find content loaded with AJAX difficult to crawl.

Algorithms are sets of instructions that computer programs follow to perform complex calculations, process data, and automatically accomplish conditional tasks.

Search engines use algorithms to answer your queries with relevant results. Google’s algorithms rely on over 200 signals, including keyword mentions, page speed, and page authority, to accomplish this task automatically on a massive scale.

If you see your rankings drop sharply overnight (e.g. from #3 to #59), you might have received an algorithmic penalty. Check Google Search Console (formerly Google Webmaster Tools) to see whether you have also received a manual penalty.

Sites hit by an algorithmic penalty typically regain their rankings on their own once they fix the issues that triggered well-known algorithms such as Panda or Penguin.

Google updates its algorithms 500 to 600 times a year on average, partly to combat spam. Once in a while, Google releases public statements when it makes major changes to its algorithms. Notable algorithm updates in recent years include Panda, Penguin, Hummingbird, Mobilegeddon, and RankBrain.

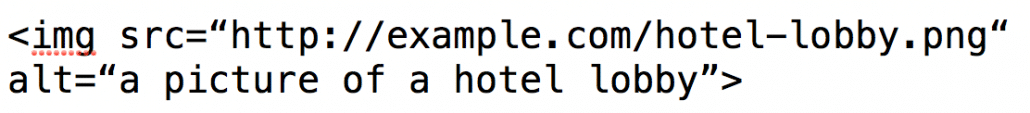

Alternative text, often shortened to “ALT tag”, refers to the alt attribute within an image tag (e.g. alt="description"). ALT text should describe the image so that search engines and users understand its nature.

If the image is contextually relevant to your resource, try to include your target keyword(s) in the ALT text.
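A quick way to audit a page for missing ALT text is to parse its HTML and list the images without a usable `alt` attribute. The sketch below uses Python’s standard `html.parser`; the class name `AltTextAuditor` and the sample markup are hypothetical. Note that an empty `alt=""` is the correct choice for purely decorative images, so flagged images still need a human review.

```python
from html.parser import HTMLParser


class AltTextAuditor(HTMLParser):
    """Collects the src of every <img> whose alt attribute is missing or empty."""

    def __init__(self):
        super().__init__()
        self.missing_alt = []

    def handle_starttag(self, tag, attrs):
        if tag != "img":
            return
        attributes = dict(attrs)
        alt = attributes.get("alt")
        # alt is None when the attribute is absent or written without a value
        if alt is None or not alt.strip():
            self.missing_alt.append(attributes.get("src", ""))


auditor = AltTextAuditor()
auditor.feed('<img src="red-purse.jpg" alt="Red leather purse">'
             '<img src="banner.jpg"><img src="spacer.gif" alt="" />')
print(auditor.missing_alt)  # ['banner.jpg', 'spacer.gif']
```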

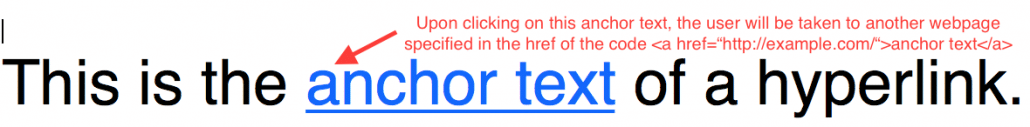

The anchor text is the clickable text within a hyperlink. Clicking the anchor text takes the user to the destination URL specified in the hyperlink code.

The words in the anchor text can influence the search engine rankings of the page that it links to. Therefore, it is good practice to make sure the anchor text is relevant to the destination page.

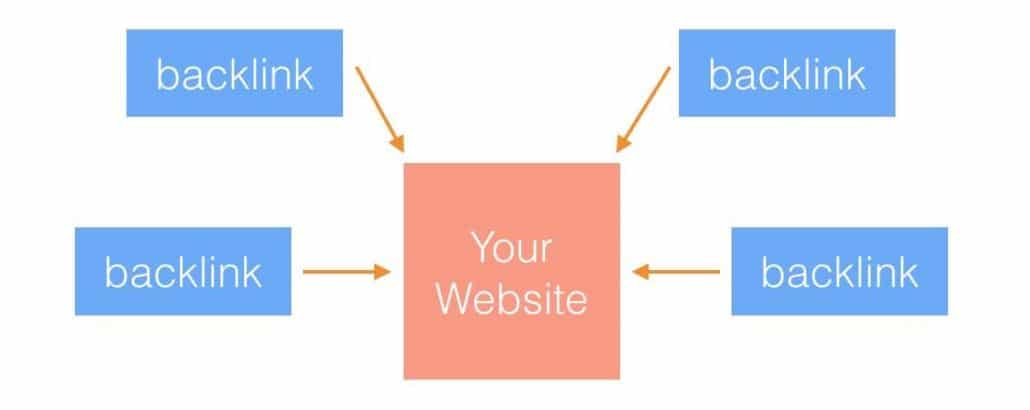

A backlink is any external link from another domain that points to any page on your domain. Backlinks are a major ranking factor in Search Engine Optimization, so having more backlinks tends to correlate with higher rankings.

Note that there are dofollow and nofollow backlinks. Dofollow links pass PageRank but nofollow links do not.

Backlink checker tools help you analyze your backlink profile to see which websites linked to your website. Here are a few recommended ones:

BackRub was the initial name of the gigantic search engine Google. Here’s the history of Google in depth.

What is Bing? – A heap, especially of metallic ore or of waste from a mine. Source – Google.

Bing is one of the most popular search engines in the world after Google. It is currently owned and operated by Microsoft.

A keyword research tool by Bing to get keyword ideas and data from Bing’s organic and paid search.

A webmaster dashboard by Bing to provide webmasters reporting tools, diagnostic tools, notifications, and a summary view of how their websites are performing on Bing’s organic search results.

Black hat SEO refers to the use of shady practices, automated programs, and aggressive techniques aimed at manipulating search engines to drive a site’s rankings up.

Websites that go against Google’s Webmaster Guidelines risk receiving a manual or algorithmic penalty.

This approach to link analysis springs from the idea that links in different sections, or blocks, of a webpage are weighted differently. In layman’s terms, a link in the footer may not count as much as a link in the header.

A web log or weblog, more commonly known as a blog, consists of entries/posts/blogposts listed in reverse chronological order (most recent entry appearing first).

Blogposts tend to be written in a more conversational and informal tone than white papers and press releases. Blogging is a great way for SEOs and content marketers to build relationships in similar communities and acquire backlinks.

A great way to build relationships and increase personal branding/brand awareness. Most blogs allow readers to post their comments and include a link back to their own websites.

A black hat SEO technique where “black hatters” aggressively post irrelevant comments that include links (usually with over optimized anchor text) back to their websites in hopes of manipulating search engine ranking signals.

A network of blogs, usually privately owned by an SEO agency, an SEO consultant, or an in-house team, that aims to manipulate search engine rankings through off-page SEO techniques such as pointing exact match anchor text links to a target domain.

In recent years, Google has clamped down on many blog networks, most notably MyBlogGuest, in an effort to deter low-quality sites that use deceptive tactics to game the search engine results pages (SERPs).

Also known as search engine spiders, robots, or crawlers. These automated software agents crawl content on the web in order to understand and index it, so that the search engine can return the most relevant results for a searcher’s query.

The bounce rate is the percentage of visitors who land on a single page of a website and leave without viewing any other pages on the same website. Web analytics tools such as Google Analytics decide what counts as a bounce using default conditions, some of which can be modified, such as ending a session after 30 minutes of inactivity.
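In its simplest form, bounce rate is single-page sessions divided by all sessions. The sketch below assumes you already have a list of page counts per session (hypothetical data, and a hypothetical `bounce_rate` helper); real analytics tools apply their own extra rules, such as interaction events and session timeouts.

```python
def bounce_rate(pages_per_session):
    """Percentage of sessions in which the visitor viewed exactly one page."""
    if not pages_per_session:
        return 0.0
    bounces = sum(1 for pages in pages_per_session if pages == 1)
    return 100 * bounces / len(pages_per_session)


sessions = [1, 3, 1, 2]  # four sessions, two of them single-page
print(bounce_rate(sessions))  # 50.0
```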

High bounce rates, especially when the traffic source is from Google search, also correlate with a decline in rankings. See pogosticking.

Branded keywords are queries that include a brand’s name. For example, the search term “amazon web services” is a branded query for the company Amazon.

Branded keywords take little to no effort to rank highly for, especially on a brand’s official domain. So a newly created website, e.g. www.ilovepurses.com, will almost always rank #1 for search terms such as “ilovepurses catalogue”.

A brand mention is any mention of a brand name in any web document. Tracking mentions is also the basis of a link building strategy known as Link Reclamation: brand mention tools track the web and alert a brand to any new mentions, and the PR team can then reach out and ask for the text where the mention occurred to be updated with a hyperlink back to the brand’s website.

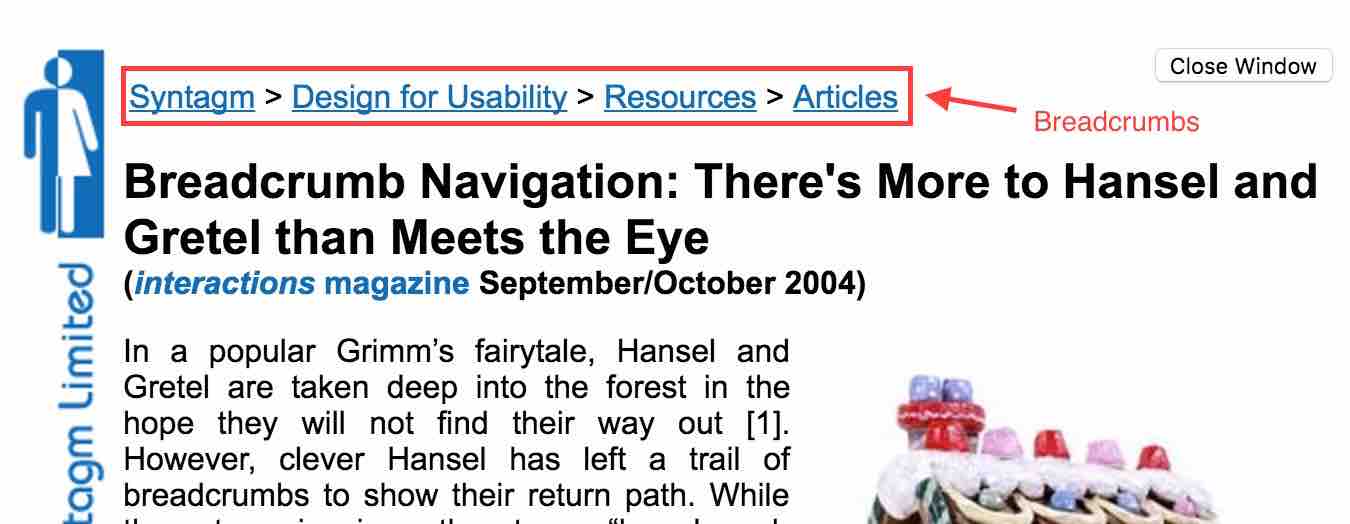

Named after the trail of breadcrumbs in Hansel and Gretel, breadcrumbs are a navigational aid that helps users keep track of their location when browsing a website.

Any link that leads to a 404 (Not Found), 502 (Bad Gateway), or 500 (Internal Server Error) page. Link equity is also lost when a hyperlink leads to a 404 page, unless the dead URL is redirected to a live URL (200 OK).

This is because Google treats 404 pages as non-existent (a black hole), so any link equity that flows to the page ends there.

Further reading:

The rel=canonical tag tells search engines that several URLs have essentially the same content. Search engines will then generally index only the canonical URL. This resolves duplicate content issues, which confuse search engines as to which version they should rank.

The rel=canonical code is placed on the duplicated pages which you do not want search engines to index. Search engines will then follow the specified URL and index it instead.
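The tag itself is a `<link>` element in the page’s `<head>`, for example `<link rel="canonical" href="https://www.example.com/new-page">`. As a minimal sketch, this subclass of Python’s standard `html.parser.HTMLParser` reads the canonical URL a page declares, which is handy when auditing duplicate pages; the class name `CanonicalFinder` and the sample HTML are hypothetical.

```python
from html.parser import HTMLParser


class CanonicalFinder(HTMLParser):
    """Records the href of the first <link rel="canonical"> in a document."""

    def __init__(self):
        super().__init__()
        self.canonical = None

    def handle_starttag(self, tag, attrs):
        if tag != "link" or self.canonical is not None:
            return
        attributes = dict(attrs)
        # rel can hold several space-separated values
        rel_values = (attributes.get("rel") or "").lower().split()
        if "canonical" in rel_values:
            self.canonical = attributes.get("href")


finder = CanonicalFinder()
finder.feed('<head><link rel="canonical" href="https://www.example.com/new-page"></head>')
print(finder.canonical)  # https://www.example.com/new-page
```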

Further reading:

A computing term for storing data in hardware or software (e.g. a browser) so that future requests for that data can be served faster.

Page speed is one of the signals Google’s algorithms look at when ranking pages. Leveraging browser caching can enhance the loading speed of your webpages, reduce bounce rates (which may be a negative ranking signal), and allow search engines to crawl more pages on your site.

Two-letter internet top-level domains designated for specific countries.

www.example.com.sg

www.example.ca

In these examples, .sg and .ca are the ccTLDs.

Having a ccTLD signals to search engines and users the geographic location in which a site originates. Google uses this signal to rank local sites higher in local search results. This means that, all else being equal, example.com.sg will tend to rank higher than example.com in google.com.sg search results pages.

The ratio of clicks to views (impressions) for an advertisement, email, video, or page link. For example, if an ad creative received 1 click from 100 views, it had a 1% CTR.
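The same arithmetic as a small Python sketch; the helper name `click_through_rate` is hypothetical, and the second call uses made-up campaign figures.

```python
def click_through_rate(clicks, impressions):
    """CTR as a percentage: clicks divided by impressions (views), times 100."""
    if impressions == 0:
        return 0.0
    return 100 * clicks / impressions


print(click_through_rate(1, 100))    # 1.0, i.e. a 1% CTR
print(click_through_rate(30, 1200))  # 2.5
```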

Does CTR influence search rankings? Check out the following articles and be the judge:

A deceptive search engine optimisation technique in which the content presented to search engine spiders is different from the content returned to a user’s browser.

Cloaking is a violation of Google’s Webmaster Guidelines.

A co-citation is a similarity measurement technique used to determine whether one subject is similar to another, based on whether a third-party source references both subjects in a single document.

In simple terms, if webpage A and B are cited by webpage C, A and B may be said to be related to each other, even though they don’t directly link to one another.
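To make the idea concrete, the sketch below counts co-citations in a tiny, hypothetical link graph: for every citing page, each pair of pages it links to is counted once. It illustrates the concept only; the helper `co_citation_counts` is hypothetical, and how search engines actually weigh co-citation is not public.

```python
from collections import Counter
from itertools import combinations


def co_citation_counts(outlinks):
    """Count how many pages link to each pair of pages together.

    outlinks maps a citing page to the set of pages it links to.
    """
    counts = Counter()
    for cited in outlinks.values():
        # sorted() gives each pair a stable order, so (A, B) and (B, A) share one key
        for pair in combinations(sorted(cited), 2):
            counts[pair] += 1
    return counts


graph = {
    "C": {"A", "B"},
    "D": {"A", "B", "E"},
    "F": {"A"},
}
print(co_citation_counts(graph)[("A", "B")])  # 2: pages C and D both cite A and B
```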

It is speculated that search engines may be able to assume similarities between page A and B based on the number of co-citations from different third party sources, and rank pages based on those signals.

Co-occurrence, on the other hand, refers to particular phrases mentioned in close proximity to each other.

It is speculated that the influence of exact match anchor text links is weakening, and that if a particular keyword is mentioned in close proximity to an outbound link, it signals to Google that the citation is contextually relevant to that keyword.

A phrase used to emphasise that correlation does not imply causation. In other words, the mutual relationship between two variables does not necessitate that one causes another.

In SEO, this phrase is commonly used when an SEO activity influenced a page’s rankings indirectly rather than directly.

For example, pages with high social media share count tend to rank higher on Google than pages with lower social media shares. As social media signals aren’t used by Google as a direct ranking signal, this event is a correlation rather than a causation.

A form of black hat SEO where a page is optimised for ranking, and its content is then swapped for another page’s content once it has achieved top rankings.

The currency of online marketing and SEO. Quality content is essential for building influence and maintaining SEO rankings.

A network of servers that speedily deliver web content to users based on their geographic locations.

As page speed is a ranking factor, delivering web content at high speed is a good enough reason to get a CDN.

A one-stop destination where web users can find any type of content (guides, ebooks, branded, curated, user generated, faqs) related to a particular topic.

Content marketing is a strategic marketing approach focused on creating and distributing valuable, relevant, and consistent content to attract and retain a clearly-defined audience — and, ultimately, to drive profitable customer action. Source – Content Marketing Institute.

Content marketing and SEO are interrelated but not entirely integrated. SEO comprises two main parts: on-page SEO and off-page SEO. On-page SEO is more technical, while off-page SEO (the link building part) relies heavily on content marketing.

The uniqueness level of a piece of content. We all know that duplicate content usually doesn’t bode well when it comes to rankings. So what’s the magical percentage?

Unfortunately, there isn’t a set percentage for determining content uniqueness. Rather, it is the unique value that a piece of content provides that influences its rankings, even if parts of it are duplicated.

A conversion is an important action that takes place in a customer’s buying journey. It can be anything from a contact form enquiry, a view of a key page, or a set amount of time spent on a page, to a sale.

The number of conversions divided by the total number of visits, expressed as a percentage.
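As a worked example with hypothetical numbers: 25 conversions from 1,000 visits is a 2.5% conversion rate. The same calculation as a small Python helper (the name `conversion_rate` is hypothetical):

```python
def conversion_rate(conversions, visits):
    """Conversions divided by total visits, as a percentage."""
    if visits == 0:
        return 0.0
    return 100 * conversions / visits


print(conversion_rate(25, 1000))  # 2.5
```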

A term for the process in which search engine spiders discover and process the information in web documents.

The number of pages of a website that a search engine will crawl, and the number of times a day it will crawl them. It is important that all pages intended to rank on Google get discovered and crawled; otherwise, they will not be indexed and ranked in Google’s search engine results pages.

Generally, the more authoritative a website is, the higher the crawling priority Google assigns it. Note that a myriad of other factors also affect crawl budget, such as how often a page is updated (e.g. About Us and Contact Us pages are rarely updated, so Google does not crawl them as often as trending news articles).

To conserve a website’s crawl budget, a robots.txt file can be used to block sections of the website that are not intended to rank on the search engine results pages.
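Python’s standard `urllib.robotparser` can check which URLs a set of robots.txt rules blocks, which is a handy sanity check before deploying changes. The rules and URLs below are hypothetical. Keep in mind that robots.txt controls crawling, not indexing: a blocked URL can still appear in search results if other pages link to it.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical rules: keep crawlers out of internal search and cart pages
ROBOTS_TXT = """\
User-agent: *
Disallow: /search/
Disallow: /cart/
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())

for url in ("https://www.example.com/blog/seo-glossary/",
            "https://www.example.com/search/?q=purses"):
    print(url, "allowed" if parser.can_fetch("Googlebot", url) else "blocked")
```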

Reciprocal linking between two different websites or two separate webpages within the same website. This signals to search engines that the linked content is related.

Curated content is relevant information on a particular topic that has been gathered and presented in an organised and meaningful way.

CSS = Cascading Style Sheets – A plain text file saved with the extension .css that is used to style and format web elements in a presentable way.

Refers to the process of a website vanishing from Google’s search results pages.

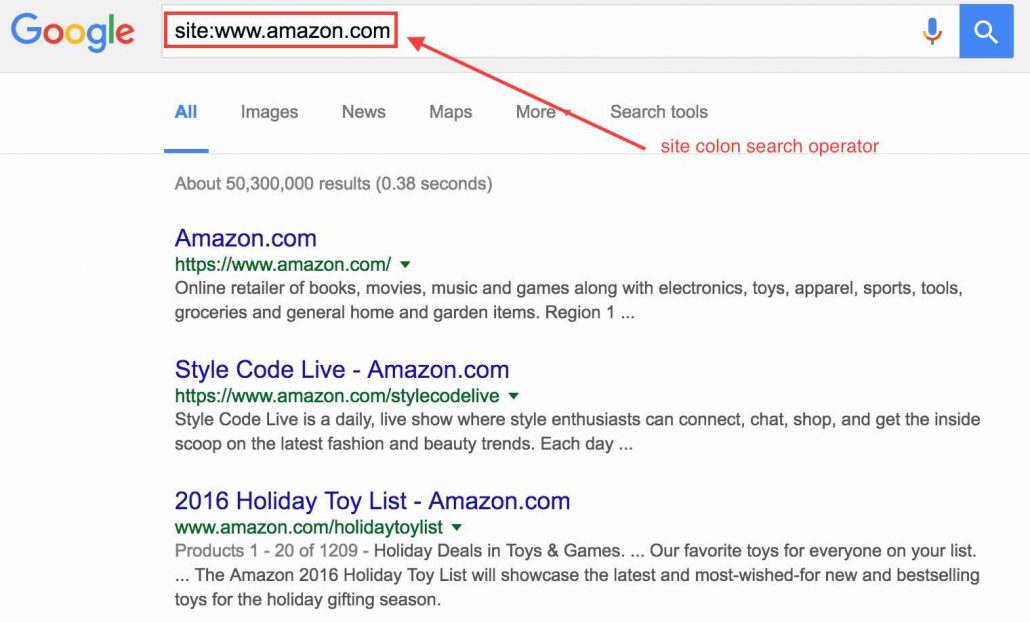

One way to check whether your website has been de-indexed is to enter the search operator site:www.yourwebsite.com into Google search. If you don’t see any results, and your website is not relatively new (a few weeks old), it is likely that you have been de-indexed.

A collection of data and links to websites, webpages, and web documents, organised into categories and subcategories. There are free and paid directories: free directories accept website listings at no cost, while paid directories charge a one-time or recurring fee for inclusion.

Directory links have been receiving flak from Google’s web spam team for the past few years.

A method of dealing with spammy backlinks that were not intentionally acquired by a webmaster.

Google launched the disavow tool in October 2012 to give SEOs a way to ask Google to ignore low-quality links that violate Google’s Webmaster Guidelines, and so help avoid a manual penalty.

However, Google strongly advises using this tool only as a last resort; SEOs should first do their best to remove low-quality links manually or through outreach.

A dofollow link is a hyperlink without the rel=”nofollow” attribute. See nofollow links. A dofollow link passes PageRank and other ranking signals (link juice) to the link destination.
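A minimal sketch of sorting a page’s links into dofollow and nofollow using Python’s standard `html.parser`. The class name `LinkClassifier` and the sample HTML are hypothetical. It only looks for the `nofollow` value; Google also recognises `rel="sponsored"` and `rel="ugc"`, which this sketch does not handle.

```python
from html.parser import HTMLParser


class LinkClassifier(HTMLParser):
    """Splits <a href> links into dofollow and nofollow lists based on rel."""

    def __init__(self):
        super().__init__()
        self.dofollow = []
        self.nofollow = []

    def handle_starttag(self, tag, attrs):
        if tag != "a":
            return
        attributes = dict(attrs)
        href = attributes.get("href")
        if href is None:
            return  # anchors without href are not links
        rel_values = (attributes.get("rel") or "").lower().split()
        if "nofollow" in rel_values:
            self.nofollow.append(href)
        else:
            self.dofollow.append(href)


links = LinkClassifier()
links.feed('<p><a href="https://a.example/">A</a> and '
           '<a href="https://b.example/" rel="nofollow noopener">B</a></p>')
print(links.dofollow, links.nofollow)
```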

A form of cloaking or spamdexing. Doorway pages or websites are created with the objective of ranking highly for specific queries, which may lead a user to multiple similar destinations instead of one useful final destination.

Examples of doorway pages include:

An SEO metric created by SaaS company Moz. Domain authority or DA can be used to predict the ranking ability of a website using a scale of 0 – 100, with 0 being the lowest and 100 being the highest.

Further reading:

What is Domain Authority? – Moz

A domain name e.g. www.example.com represents an Internet Protocol (IP) address e.g. 192.168.1.1 and is mapped to a computer server so that it can be accessed from anywhere in the world on the World Wide Web.

There are different generic top-level domains (gTLDs) such as .com, .info, .net, .org, and .edu, and country code top-level domains (ccTLDs) such as .sg, .my, .ca, .us, and .au.

Duplicate content is identical or very similar content appearing in multiple locations (webpages/URLs). It causes problems when search engines don’t know which version to show to users, and can result in search engines 1. dropping all versions of the content from the search results pages or 2. ranking the wrong version.

There are many examples and different variations of duplicate content listed in this article.

In layman’s terms, dwell time starts when a user clicks on a search result and ends when the user returns to the search results pages.

Why does this metric matter to search engines? Since search engines aim to serve useful content to their users, a longer dwell time suggests that the content was useful enough for the user to spend time digesting it.

Further reading:

Dwell Time: Does This Ranking Factor Really Live Up to the Hype?

Dynamic content, the opposite of static content, serves content that changes based on the user (e.g. browsing behaviour).

Further reading:

Penalisation for personalisation: Google, dynamic content & SEO

Serving different content to different users based on their device type. This is implemented to provide a mobile-friendly experience to mobile users as well as a desktop experience to desktop users, and optimise content for mobile searches.

Another popular method for mobile SEO is responsive design.

Dynamic URLs can change based on parameters such as a session ID. They typically look something like http://example.com/page?id=21, or include any of these characters: ? = &. By contrast, static URLs look like http://example.com/page.

If you have dynamic parameters, Google recommends that you do not rewrite them into static URLs as this may result in Googlebot crawling the same piece of content needlessly via many different URLs with varying values for session IDs.
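To tell dynamic and static URLs apart programmatically, you can check for a query string with Python’s standard `urllib.parse`. This is a simplified rule of thumb (some sites encode parameters in the path instead), and the helper names and URLs are hypothetical.

```python
from urllib.parse import parse_qs, urlparse


def is_dynamic(url):
    """Treat a URL as dynamic if it carries a query string (?key=value&...)."""
    return bool(urlparse(url).query)


def query_parameters(url):
    """Return the URL's query parameters as a dict of lists."""
    return parse_qs(urlparse(url).query)


print(is_dynamic("http://example.com/page?id=21"))  # True
print(is_dynamic("http://example.com/page"))        # False
print(query_parameters("http://example.com/page?id=21&sessionid=abc"))
```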

Further reading:

Indicators which Google uses to assess page quality. Found and mentioned repeatedly in Google’s Search Quality Rating Guidelines.

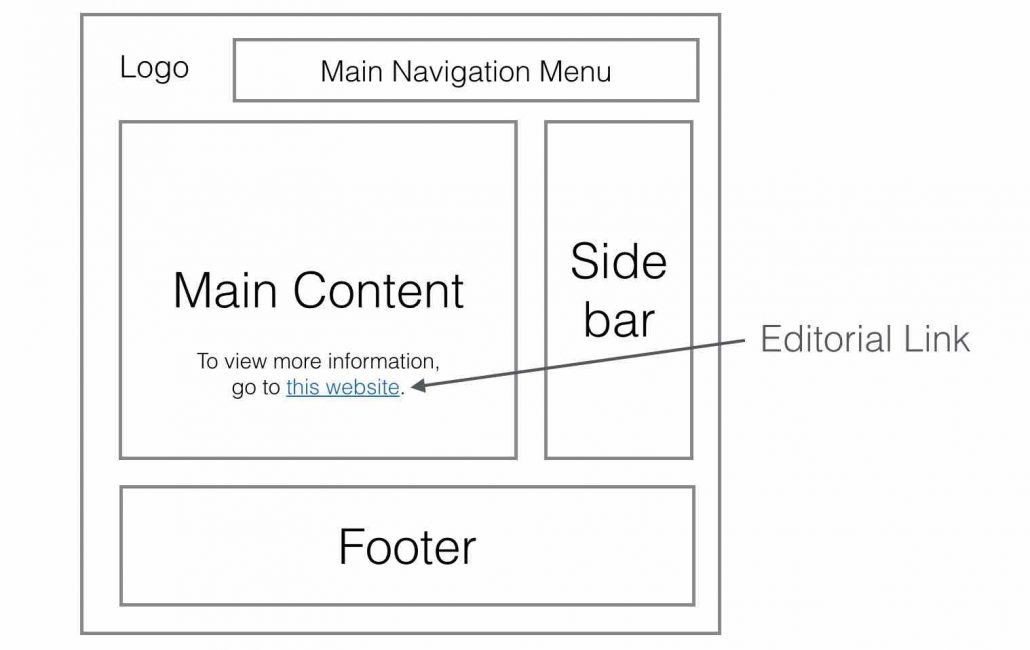

A hyperlink embedded within a page’s main content e.g. a blogpost.

A link building technique used by SEOs to acquire backlinks. Egobait is essentially a link building asset created to stroke the ego of the target linker, with the aim of increasing visibility among influencers and, hopefully, acquiring backlinks in the process.

Further reading:

Ego Bait for Links, Visibility, and Authority

A Guide To Producing Effective Egobait

Google has been moving towards entity search which is based on the knowledge graph. When users search for a particular entity e.g. “mount fuji”, Google displays specific information on this entity on the right side of desktop search results.

Google is able to do that by analysing past searches and user browsing behaviour to determine what information users are looking for, then displays the information within graph panels on the search results pages.

Everflux essentially means constant fluctuation and refers to the way Google updates its index – by continuously crawling the web for new content and integrating it as quickly as possible into a separate index.

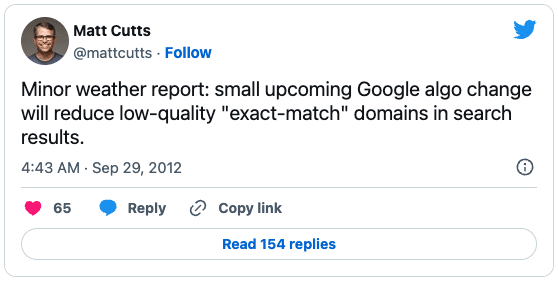

Back in the early days of SEO, exact match domain names (EMDs) ranked very well even without any on-page or off-page optimisation.

An exact match domain is a keyword-targeted domain. For example, if you wanted to rank for the keyword phrase “how to create a server”, you would buy www.howtocreateaserver.com and, back then, you could rank at the top of Google.

The EMD algorithm update in 2012 aimed to reduce low-quality “exact match” domains in search results, according to Matt Cutts.

An external link is any hyperlink that points to a destination URL other than its own domain. If your website (e.g. www.yourwebsite.com) links to another website (e.g. www.anotherwebsite.com), the link is known as an external link.

Similarly if another website links to your website, it is also considered an external link.

There are some ongoing debates on whether linking externally to another website will help or hurt your SEO.

A programming language; search engines have difficulty crawling and understanding content built with it.

Using frames on a page is the act of dividing the screen into different windows, displaying content from different URLs in a single view. Not a recommended technique as this may cause search engines to rank the wrong page on their search results.

Further reading:

Why Google doesn’t like frames in your sites

A ranking signal which Google has explored many ways of using to influence the search results, as seen in many patents filed by Google.

Further reading:

10 Illustrations of How Fresh Content May Influence Google Rankings (Updated)

Google Freshness Algorithm Experiment

Google Keyword Planner was previously referred to as Google AdWords Keyword Tool.

Google Alerts is a free tool provided by Google that allows you to set up email alerts to monitor the web for interesting content and mentions of your brand, your competitors, and any particular entity.

A practice whereby webpages are optimised to rank highly for irrelevant search terms.

One of the most infamous Google bombs in history targeted US President George W. Bush for the keyword “miserable failure”.

Refers to an algorithmic fluctuation of Google’s search results pages as a result of rebuilding its rankings for a period of time.

Google’s web crawling robot, also known as a “search engine spider”.

A keyword research tool provided by Google. You need a Google AdWords account in order to use this tool.

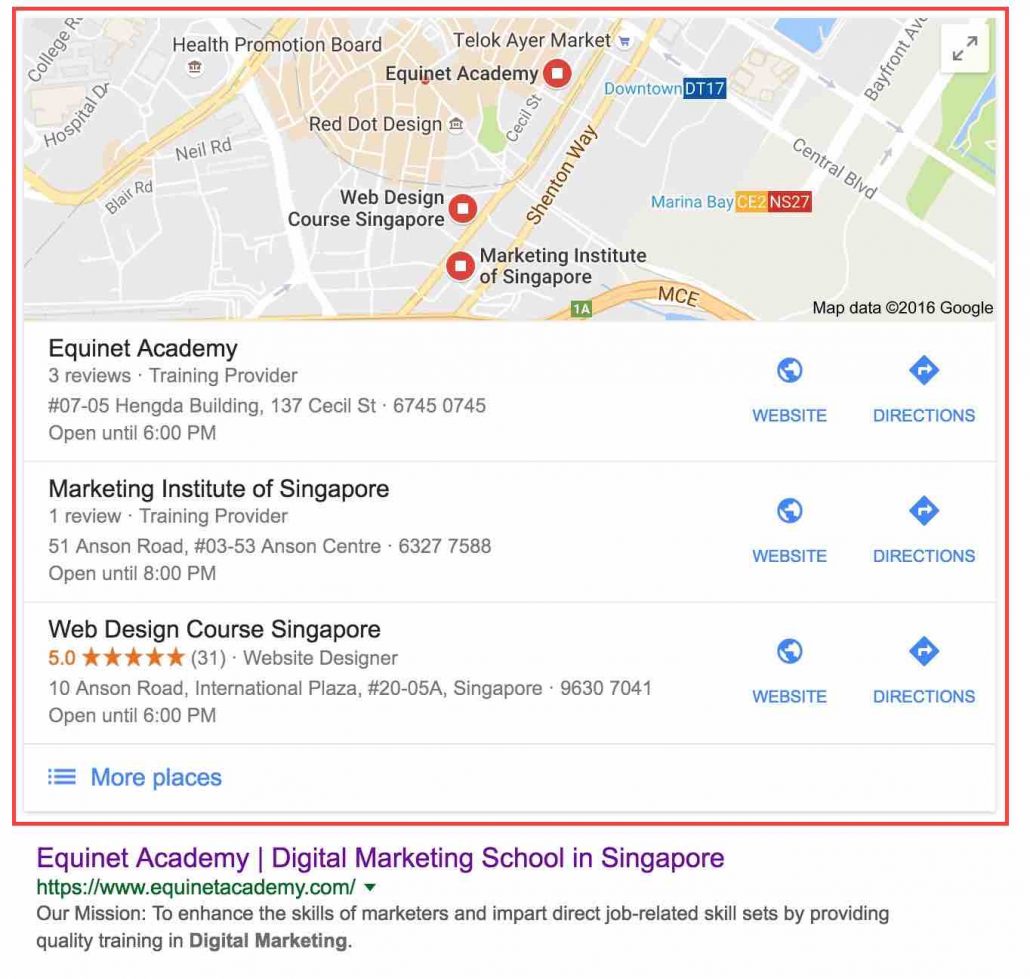

A free Google business listing service that will enable a business to be found on Google Search and Google Maps.

Links shown below some of Google’s search results to help users navigate directly to various sections of a website. At this point in time, sitelinks are automatically analysed and selected by Google’s algorithms, therefore a webmaster won’t be able to control them directly.

The term Google Slap has two meanings: one for Google’s paid search results and the other for its organic search results.

Receiving a Google Slap on the paid search results means you haven’t been adhering to the Google AdWords guidelines and best practices, resulting in a significant drop in the Quality Score of your target keywords, or an account ban.

For the organic search results, getting a Google Slap means you haven’t been abiding by Google’s Webmaster Quality Guidelines (e.g. building spammy backlinks and keyword stuffing), resulting in a significant drop in keyword rankings or complete removal of your website in Google’s index.

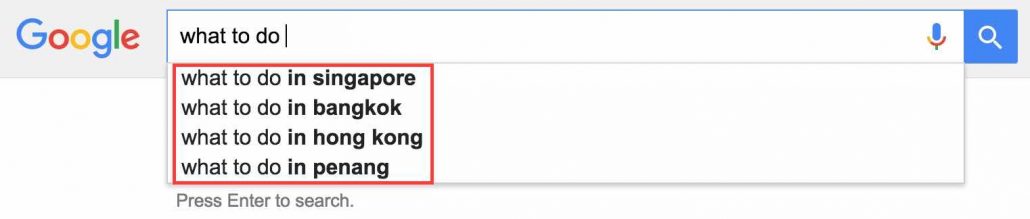

Suggested search terms that show as you type in your search queries on Google.

Contains supplemental results which reside in a secondary database that are deemed less important by Google.

A free tool by Google that allows you to explore trending search topics.

A set of general guidelines, best practices, and principles for webmasters to reference in order to avoid getting penalised by Google.

A free tool for webmasters to track their website’s search performance with Google, get support on site issues, and perform a variety of actions such as fetching Googlebot to crawl a page, submitting a sitemap, and targeting a website to a specific country.

Guestographics is a link building technique in which a link builder posts an infographic on his/her site, reaches out to other bloggers that published similar content, and offers the infographic to visually represent their content, possibly earning links in the process.

A marketing method whereby a blogger contributes an article to another blog. This achieves several things:

However, over-optimising anchor text and guest blogging purely for links are frowned upon by Google.

As Google moved toward semantic search, focusing on user intent and returning contextually relevant results, Hummingbird represents a newly revamped version of Google’s algorithm.

Interesting fact: Hummingbird is the name of Google’s algorithm.

Further reading:

FAQ: All About The New Google “Hummingbird” Algorithm

How Google Hummingbird Changed the Future of Search

Hyper Text Markup Language, or HTML, is the standard markup language used to create structured documents on the World Wide Web.

There are a range of HTML elements such as the

The text before the extension of an image file (e.g. image001 in image001.jpg). For SEO purposes, it is recommended that the image filename include your target keywords, provided they are contextually relevant.

A sitemap listing the images available on your website, which increases the chances of your images being picked up by Google and displayed in Google Image search results.

You may keep your image sitemap separate from your XML sitemap, or you may combine them.
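As a sketch, Python’s standard `xml.etree.ElementTree` can generate an image sitemap using the sitemap namespace together with Google’s image extension namespace (`http://www.google.com/schemas/sitemap-image/1.1`). The helper `build_image_sitemap` and the page and image URLs are hypothetical; check Google’s current image sitemap documentation for the supported tags before relying on this.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"
IMAGE_NS = "http://www.google.com/schemas/sitemap-image/1.1"

# Serialise with a default namespace and the conventional "image:" prefix
ET.register_namespace("", SITEMAP_NS)
ET.register_namespace("image", IMAGE_NS)


def build_image_sitemap(pages):
    """pages maps a page URL to the list of image URLs that appear on it."""
    urlset = ET.Element(f"{{{SITEMAP_NS}}}urlset")
    for page_url, image_urls in pages.items():
        url = ET.SubElement(urlset, f"{{{SITEMAP_NS}}}url")
        ET.SubElement(url, f"{{{SITEMAP_NS}}}loc").text = page_url
        for image_url in image_urls:
            image = ET.SubElement(url, f"{{{IMAGE_NS}}}image")
            ET.SubElement(image, f"{{{IMAGE_NS}}}loc").text = image_url
    return ET.tostring(urlset, encoding="unicode")


xml_text = build_image_sitemap({
    "https://www.example.com/purses/": ["https://www.example.com/img/red-purse.jpg"],
})
print(xml_text)
```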

The image title and caption are the content surrounding the image; they give search engines information about the topic and content of the page.

The number of times a search result is seen regardless of whether it is clicked or not.

A hyperlink from another website to your website. An inbound link may be “followed” or “nofollowed”. See rel=”nofollow”.

A search engine’s index is similar in concept to a library’s index of books, whereby information is sorted and organised by predefined parameters so that it is easily retrievable when a search is conducted.

Additionally, indexing refers to the process of adding webpages to a search engine’s index.

A page’s indexability refers to how easily, or how likely, a page can be included in a search engine’s index. It depends on a variety of factors, such as the coding language used and how unique and authoritative the content is. Pages built with Flash and JavaScript risk not getting indexed because search engines may not understand their contents.

An abbreviation of the phrase “information graphic”. An infographic is a graphical representation of information, data, or knowledge and is designed to be visually appealing.

It is also a popular link building technique.

A method of hyperlinking different pages within a site to one another in efforts to signal to search engines the relevancy between the linked pages.

The process of optimising a website to improve its search engine rankings in multiple countries. A few methods include:

Interstitials are ads that appear in the form of a popup, lightbox, or separate browser window while you are browsing or waiting for a page to load.

Google announced in 2016 that, starting 10 January 2017, it would crack down on “intrusive interstitials” in the mobile experience.

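A numerical statistic that evaluates how important a word is to a document within a collection of documents. The weight increases with the number of times the word appears in the document (term frequency) and is offset by the number of documents in the collection that contain the word (inverse document frequency).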
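TF-IDF combines the two parts by multiplying them. Several weighting variants exist; the sketch below uses one common form: term frequency as a share of the document’s words, times the natural log of (number of documents / documents containing the term). The `tf_idf` helper and the tiny corpus are hypothetical, and this is not a claim about how any search engine scores pages.

```python
import math
from collections import Counter


def tf_idf(term, document, corpus):
    """Score `term` for one tokenised document against a corpus of tokenised documents."""
    tf = Counter(document)[term] / len(document)
    docs_with_term = sum(1 for doc in corpus if term in doc)
    if docs_with_term == 0:
        return 0.0
    idf = math.log(len(corpus) / docs_with_term)
    return tf * idf


corpus = [
    ["seo", "glossary", "seo"],
    ["seo", "tips"],
    ["purse", "catalogue"],
]
# "glossary" is rare across the corpus, so it outscores the more frequent "seo"
print(tf_idf("glossary", corpus[0], corpus))
print(tf_idf("seo", corpus[0], corpus))
```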

IP stands for Internet Protocol, and an IP address is a unique set of numbers (e.g. 192.168.1.1) that identifies a device on a computer network.

Acquiring many backlinks from unique IP addresses is a strong ranking signal as it signifies the diversity of a website’s backlink profile.

A lightweight programming language that can add dynamic interactivity to your website (for example, clicking on a button to display hidden content).

Further reading:

We Tested How Googlebot Crawls Javascript And Here’s What We Learned

Why All SEOs Should Unblock JavaScript & CSS… And Why Google Cares

jQuery is a fast, small, and feature-rich JavaScript library. It makes things like HTML document traversal and manipulation, event handling, animation, and Ajax much simpler with an easy-to-use API that works across a multitude of browsers. – jQuery.com

Keywords, in SEO context, are words and/or phrases used in webpages to help search engines connect users searching for relevant search terms to your website.

An undesirable situation in which multiple pages on the same site target the same keyword (e.g. with duplicate page titles), confusing search engines and negatively affecting search engine rankings.
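Duplicate page titles are one easy symptom to check for. This minimal sketch assumes you have already crawled your site into a hypothetical `{url: title}` mapping, and groups URLs whose titles match after ignoring case and surrounding whitespace; real audits usually also compare H1s and target keywords.

```python
from collections import defaultdict


def find_cannibalised_titles(pages: dict[str, str]) -> dict[str, list[str]]:
    """Group URLs by normalised title and keep only titles used by 2+ pages."""
    by_title: defaultdict[str, list[str]] = defaultdict(list)
    for url, title in pages.items():
        # Normalise case and surrounding whitespace so near-identical titles match.
        by_title[title.strip().lower()].append(url)
    return {title: urls for title, urls in by_title.items() if len(urls) > 1}


pages = {
    "/blog/buy-a-home": "How to Buy a Home",
    "/guides/home-buying": "How to buy a home ",
    "/blog/stamp-duty": "Stamp Duty Explained",
}
print(find_cannibalised_titles(pages))
```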

The process of grouping multiple keywords that are related to a specific topic into a section of a website or a single webpage. This enables you to develop more targeted content to rank for a group of related keywords.

The number of times a keyword or phrase appears in a web document, expressed as a percentage of the document’s total word count. There isn’t a set rule on the ideal keyword density for achieving good rankings. However, keyword density does shape what search engines think the overall topic of your content is, and this can influence your rankings.
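In other words, keyword density = (keyword occurrences / total words) × 100. The sketch below assumes that a multi-word phrase counts once per occurrence; some tools instead count every word of the phrase, which gives a higher figure. The sample text is made up.

```python
import re


def keyword_density(text: str, keyword: str) -> float:
    """Occurrences of `keyword` as a percentage of the total word count."""
    words = re.findall(r"[a-z0-9']+", text.lower())
    phrase = re.findall(r"[a-z0-9']+", keyword.lower())
    if not words or not phrase:
        return 0.0
    # Slide a window the length of the phrase across the text and count exact matches.
    hits = sum(
        1
        for i in range(len(words) - len(phrase) + 1)
        if words[i:i + len(phrase)] == phrase
    )
    return 100 * hits / len(words)


text = "Real estate prices are rising. Buying real estate in 2024 is costly."
print(round(keyword_density(text, "real estate"), 2))  # 16.67 (2 hits / 12 words)
```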

Further reading:

Shattering The Myth About The Keyword Density Formula

Keyword difficulty or keyword competition is a measurement of how much effort would be required for a webpage to rank for a particular keyword.

There are a variety of keyword difficulty tools that automatically calculate keyword competition:

The process of including target keywords in specific locations of a web document (for example the title tag, meta description, and URL) to increase search engine rankings.

The position of a webpage on the organic search engine results pages (SERPs).

The process of discovering what keywords your prospects are using and understanding their intent to better optimise your pages for both users and search engines.

An online or offline instrument that enables you to retrieve keyword data and insights.

Suggested keyword research tools:

A black hat SEO technique whereby the same keyword is repeatedly mentioned in a web document with little regard for a meaningful user experience.

An enhancement to Google’s search engine which delivers new information quickly and easily by deeply understanding the meaning of the search query.

Introducing the Knowledge Graph: things, not strings

Google’s Knowledge Graph Explained: How It Influences SEO

In the context of search engine optimisation, a landing page is any page that a user lands on after clicking on a search result.

Latent semantic indexing or LSI is a mathematical technique for identifying relationships between terms and concepts in a body of text. In SEO, it refers to how search engines may determine whether a page’s content is semantically relevant to a keyword by looking for synonyms of the keyword or words that are related to the subject.

For example, home, real estate, property, stamp duty, and property tax are all LSI keywords.

Any hyperlink (clickable text, button, or image) that takes users to another section (internally or externally) of a website or webpage.

The total time a link has existed in a search engine’s index. It is also presumed by many SEOs that link age is used as a ranking signal.

The process of acquiring a backlink to increase search engine rankings.

A piece of content (infographic, case study, research paper, blogpost, image) designed with the purpose of attracting content creators to hyperlink to it.

Further reading:

The process of acquiring backlinks through outreach, submission (e.g. to resource pages), and PR.

Further reading:

A 4-Step Link Building Methodology

A sudden, significant increase in the number of backlinks pointing to a website, especially a new website.

This may prompt Google’s manual webspam team to investigate, since links typically build up slowly over time.

The act of buying or selling links that pass PageRank. Adding a rel="nofollow" attribute to a sponsored link, so that it does not pass PageRank, is fine.

Buying or selling links goes against Google’s Quality Guidelines – Link Schemes. This extends to non-monetary transactional deals such as exchanging free gifts for links.

Links that block PageRank from flowing to the destination URL. This is done by adding the rel="nofollow" attribute to the link, e.g.:

<a href="https://www.example.com/" rel="nofollow">click here</a>

Link diversity refers to the assortment of the kinds of links in a website’s link profile. These attributes can be classified into:

Further reading:

20 Attributes that Influence a Link’s Value – Whiteboard Friday

Link authority refers to the amount of value a link passes to the destination page. This includes:

Also known as reciprocal linking. Reciprocal linking is perfectly “legal” if it is naturally acquired. For example, if website A links to website B from the footer with the anchor text “visit our sister company”, it is considered natural.

However, sending unsolicited emails to a ton of different websites offering to link to them if they link to you is a form of link scheme, according to Google’s Webmaster Quality Guidelines.

A network of websites that link to one another, although the content is often unrelated and of low quality. The idea is that this reciprocal linking pattern will improve search engine rankings. However, the opposite is true, as search engines have developed algorithms to detect this unnatural linking pattern.

The act of trying to prevent link juice from flowing out to other websites, such as by adding a nofollow attribute to outgoing links or not linking out at all.

This is generally a bad idea, as Google can easily detect it. Moreover, some studies conclude that outgoing links play a part in rankings.

Not linking out to other sites also reduces your chances of cultivating link building relationships.

A link building technique of reaching out to someone who has mentioned your brand or referenced your content, but did not link to you, and asking them to link to you.

Further reading:

Link Reclamation Best Practices – The Complete Guide

Link Reclamation: How to Reclaim Links for SEO

Link Reclamation – How to Get the Links You Deserve

A measurement of how relevant a link is to the destination URL. Some clues search engine algorithms look for include:

Further reading:

Link rot refers to any hyperlink on the web that no longer functions as intended. It may come in the form of broken links (links that lead to 404 pages), or the page on which the link was found may have been taken down. This is bad for your SEO, as broken links lead to a loss of link value.

Here are some possible causes of link rot:

Further reading:

SEO: Avoiding link rot with your aging website

Any form of link building which goes against Google’s Webmaster Quality Guidelines – Link Schemes. Some examples of link spam include:

Throughout the history of SEO, Google has constantly cracked down on link spam (spammy link building activities).

Some of Google’s most notable link spam algorithms:

Also known as “anchor text”. The clickable text of a hyperlink.

The rate (how fast or how slow) at which links are acquired over a span of time.

A steep rise in acquired backlinks in a short amount of time can be a red flag to search engines, suggesting unnatural link building techniques. On the other hand, a slowdown in link acquisition can signal to search engines that the web is losing interest in the domain, which may affect its domain authority.
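To watch your own link velocity, you can bucket backlinks by the month they were first seen. The sketch below assumes you have exported "first seen" dates from a backlink checker; the function names and the spike rule (more than 3× the average of earlier months) are hypothetical choices for illustration, not a threshold any search engine has published.

```python
from collections import Counter
from datetime import date


def monthly_link_velocity(first_seen: list[date]) -> dict[str, int]:
    """Count newly acquired backlinks per calendar month, oldest month first."""
    counts = Counter(d.strftime("%Y-%m") for d in first_seen)
    return dict(sorted(counts.items()))


def flag_spikes(velocity: dict[str, int], factor: float = 3.0) -> list[str]:
    """Flag months with more than `factor` times the average of all earlier months.

    Months with no new links are absent from `velocity`, so only months
    that actually gained links are compared.
    """
    flagged: list[str] = []
    history: list[int] = []
    for month, count in velocity.items():
        if history and count > factor * sum(history) / len(history):
            flagged.append(month)
        history.append(count)
    return flagged


# Made-up "first seen" dates: a steady trickle, then 20 links in April.
links = [date(2024, 1, 5), date(2024, 1, 20), date(2024, 2, 3),
         date(2024, 2, 11), date(2024, 3, 1)]
links += [date(2024, 4, day) for day in range(1, 21)]
velocity = monthly_link_velocity(links)
print(velocity)               # {'2024-01': 2, '2024-02': 2, '2024-03': 1, '2024-04': 20}
print(flag_spikes(velocity))  # ['2024-04']
```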

A term describing website owners and content creators who link out to other websites, coined by Rand Fishkin.

Where your company’s Name, Address, Phone number (NAP) is mentioned on other websites such as directory listings.

This helps to rank your Google My Business listing page better on the local search results.

The method of optimising a local business to rank on the local search results. This includes building local citations, earning Google My Business reviews, and building the website’s Page Authority.

Further reading:

Local Search Ranking Factors – Moz

Keyword phrases that are longer and more specific to the searcher’s intent. The opposite is short tail keywords, which are more generic in nature.

Examples of short tail keywords in the real estate niche include:

Examples of long tail keywords in the real estate niche include:

All content on a webpage can be classified as either Main Content (MC) or Supplementary Content (SC).

The MC is any part of the webpage that directly helps the page to achieve its main purpose. For example, the MC of a currency converter tool page would be the currency converter tool itself. It should be displayed above the fold and in a prominent location of the page.

Further reading:

Official Google Search Quality Rater Guidelines

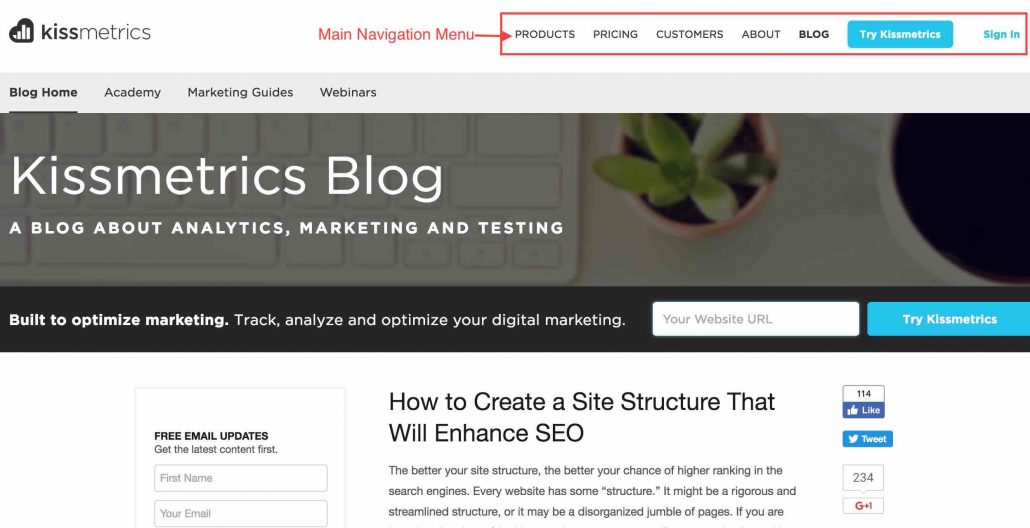

A large drop-down navigation menu that contains links to internal pages of a website, usually located in the header section.

Further reading:

Mega Menus & SEO – Search Engine Land

A manual action whereby a human engineer from Google or Bing has manually reviewed a site flagged for spammy activities relating to Search Engine Optimisation and purposefully prevents the site from ranking highly.

Further reading:

Fighting Spam – Inside Search – Google

An HTML meta tag that provides search engines with a summarised description of the contents of your webpage. Search engines commonly display it as the preview snippet on the search engine results pages.

Note: Meta description isn’t a ranking factor; however, it may increase the click-through rate of your search results, resulting in increased website traffic.

An HTML meta tag that lists the keywords found on the webpage. It is no longer used as a ranking factor by major search engines. In fact, spamming this meta tag can have adverse effects on your rankings.

Further reading:

Is the Meta Keyword Tag Still Used by Google, Bing, And Yahoo? – Chris Edwards

A method of getting a web browser to refresh a page after a given time interval, e.g. after 5 seconds. It can also be used to instruct the web browser to redirect the user to a different URL when the page refreshes.

Here’s what a meta refresh tag looks like:

<meta http-equiv="refresh" content="5; url=https://www.example.com/new-page" />

Google recognises meta refresh as a page redirect and allows PageRank to flow from the current page to the redirected page. However, a 301 permanent redirect is recommended if the URL of the page has permanently changed.

An HTML meta tag that instructs search engines to obey a set of rules, such as not indexing a page and not following any links on the page.

<meta name="robots" content="noindex,nofollow" />
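To check which directives a page actually carries, you can parse its HTML. Below is a minimal sketch using Python's built-in `html.parser`; the class and function names are hypothetical. It only reads `<meta name="robots">` tags and ignores crawler-specific tags (e.g. `name="googlebot"`) and the `X-Robots-Tag` HTTP header, both of which can also carry these directives.

```python
from html.parser import HTMLParser


class RobotsMetaParser(HTMLParser):
    """Collect directives from <meta name="robots" content="..."> tags."""

    def __init__(self) -> None:
        super().__init__()
        self.directives: set[str] = set()

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        if tag == "meta" and (attrs.get("name") or "").lower() == "robots":
            content = attrs.get("content") or ""
            # Directives are comma-separated and case-insensitive.
            self.directives.update(
                d.strip().lower() for d in content.split(",") if d.strip()
            )


def robots_directives(html: str) -> set[str]:
    parser = RobotsMetaParser()
    parser.feed(html)
    return parser.directives


page = '<head><meta name="robots" content="noindex,nofollow" /></head>'
print(sorted(robots_directives(page)))  # ['nofollow', 'noindex']
```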

Further reading:

The Ultimate Guide to the Meta Robots Tag – Yoast

A mirror site is a replica of another existing website. It is commonly used for the following reasons:

A Google ranking algorithm update that launched on 21 April 2015. It ranks webpages on mobile search results based on how mobile-friendly (readable text size, touch elements well spaced out, etc) they are.

Further reading:

Mobile-first indexing – Google Webmaster Central Blog

Describes how accessible a webpage is to users who are browsing the page on a mobile device. For example:

The best way to check whether a webpage is mobile friendly is via the Mobile-Friendly Test Tool provided for free by Google.

Further reading:

MozRank represents how important a webpage is by calculating the number and quality of webpages that link to it (link popularity). It was developed by Moz.

Further reading:

The method of optimising SEO tags and contents on a webpage (images and text) for a variety of keywords.

For example the title tag of a page could include more than one variation of the keyword:

Where meeting and conference are synonyms but are both included to optimise the title for multiple keywords.

In SEO context, NLP or Natural Language Processing is the ability of a computer program to interpret human language and understand the meaning behind a query, and then be able to return relevant answers.

The rollout of Google’s Hummingbird and RankBrain algorithm updates, as well as the Knowledge Graph, brought significant improvements in Natural Language Processing.

Backlinks that are acquired through non-spammy techniques such as one to one outreach, quality guest blogging, and submission of webpages to high quality web resources.

A deliberate act of spamming black hat links to a competitor’s website with the aim of sabotaging its rankings through a search engine penalty.

Further reading:

A Startling Case Study of Manual Penalties and Negative SEO

A creative link building technique whereby SEOs ride on a trending news story and contribute their own piece of the story, in an effort to drive links back to their content. This usually involves a lot of participation in popular social media channels.

Groups of keywords and keyword phrases that contain keywords related to a particular niche.

An HTML attribute value that can be added to the code behind a hyperlink to tell search engines not to pass PageRank to the destination URL.

<a href="https://www.example.com/" rel="nofollow">This link does not pass PageRank</a>

This is generally done when webmasters don’t fully trust the source they are linking to, thus preventing PageRank from flowing to that source.
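When auditing a page, it helps to separate followed links from nofollowed ones. Below is a minimal sketch using Python's built-in `html.parser`; the class name `LinkAuditParser` and the sample HTML are hypothetical. It treats a link as nofollowed only when `nofollow` appears among the space-separated values of its `rel` attribute, and it does not account for a page-level `<meta name="robots" content="nofollow">`.

```python
from html.parser import HTMLParser


class LinkAuditParser(HTMLParser):
    """Split <a href> links into followed and nofollowed lists."""

    def __init__(self) -> None:
        super().__init__()
        self.followed: list[str] = []
        self.nofollowed: list[str] = []

    def handle_starttag(self, tag, attrs):
        if tag != "a":
            return
        attrs = dict(attrs)
        href = attrs.get("href")
        if not href:
            return
        # rel can hold several space-separated values, e.g. rel="nofollow noopener".
        rel_values = (attrs.get("rel") or "").lower().split()
        if "nofollow" in rel_values:
            self.nofollowed.append(href)
        else:
            self.followed.append(href)


html = (
    '<a href="https://www.example.com/a">Followed</a>'
    '<a href="https://www.example.com/b" rel="nofollow noopener">Not followed</a>'
)
parser = LinkAuditParser()
parser.feed(html)
print(parser.followed, parser.nofollowed)
```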

A robots meta tag directive that tells search engines not to index a particular webpage.

<meta name="robots" content="noindex" />

Since 2011, Google has hidden from webmasters the search queries that visitors typed into Google Search before clicking on their search result. As a result, these keywords show up as “(not provided)” in Google Analytics.

Refers to external website related activities (mainly link building and content marketing) in order to boost a website’s search engine ranking positions.

It also extends to local SEO, where local citation building and link building play a big part in ranking Google My Business pages on the local search results.

Refers to internal website activities such as keyword, site speed, mobile, and content optimisation in order to boost a website’s search engine ranking positions.

Online Reputation Management refers to the practice of monitoring and attempting to positively influence the public perception of a brand or a person on the web.

Some strategies include addressing negative comments directly on social media platforms (forums, Facebook, Twitter) and performing search engine optimisation on brand search terms to ensure the first page of the SERPs does not contain any negative results.

The non-paid section of a search engine results page, usually below the paid ads. Advertisers are charged a fee when a user clicks on a paid ad result, whereas no fees are charged when a user clicks on an organic search result.

A ranking algorithm created by Google co-founders Larry Page and Sergey Brin to calculate the importance, authority, and reliability of a web page on a scale of 0 to 10, with 0 being the lowest and 10 being the highest.
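The 0 to 10 score was a simplified public display. The underlying calculation is commonly written as PR(p) = (1 - d)/N + d × Σ PR(q)/L(q), summing over every page q that links to p, where d is a damping factor (commonly 0.85), N is the number of pages, and L(q) is the number of outgoing links on q. Below is a minimal power-iteration sketch of that textbook formula on a made-up three-page site; Google's production ranking is far more complex and not public.

```python
def pagerank(links: dict[str, list[str]], damping: float = 0.85,
             iterations: int = 50) -> dict[str, float]:
    """Power-iteration PageRank over a link graph {page: [pages it links to]}."""
    pages = set(links) | {t for targets in links.values() for t in targets}
    n = len(pages)
    rank = {p: 1.0 / n for p in pages}
    for _ in range(iterations):
        # Every page receives the "random jump" share (1 - d) / N.
        new_rank = {p: (1.0 - damping) / n for p in pages}
        for page in pages:
            targets = links.get(page, [])
            if targets:
                # A page splits its rank equally among its outgoing links.
                share = damping * rank[page] / len(targets)
                for target in targets:
                    new_rank[target] += share
            else:
                # Dangling page (no outgoing links): spread its rank over all pages.
                for target in pages:
                    new_rank[target] += damping * rank[page] / n
        rank = new_rank
    return rank


graph = {"home": ["about", "blog"], "about": ["home"], "blog": ["home", "about"]}
for page, score in sorted(pagerank(graph).items(), key=lambda kv: -kv[1]):
    print(f"{page}: {score:.3f}")
```

The scores always sum to 1; "home" ranks highest because both other pages link to it.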

No one explains this better than Moz, the creator of Page Authority:

Page Authority is a score (on a 100-point scale) developed by Moz that predicts how well a specific page will rank on search engines. It is based off data from the Mozscape web index and includes link counts, MozRank, MozTrust, and dozens of other factors.

The amount of time it takes for a webpage to load.

Page loading time is a Google ranking factor which may affect your rankings if it loads too slowly. Google recommends your pages load in 4 seconds or less.

If your site is loading slowly, you may check for any issues with this tool: PageSpeed Insights

Some common issues include:

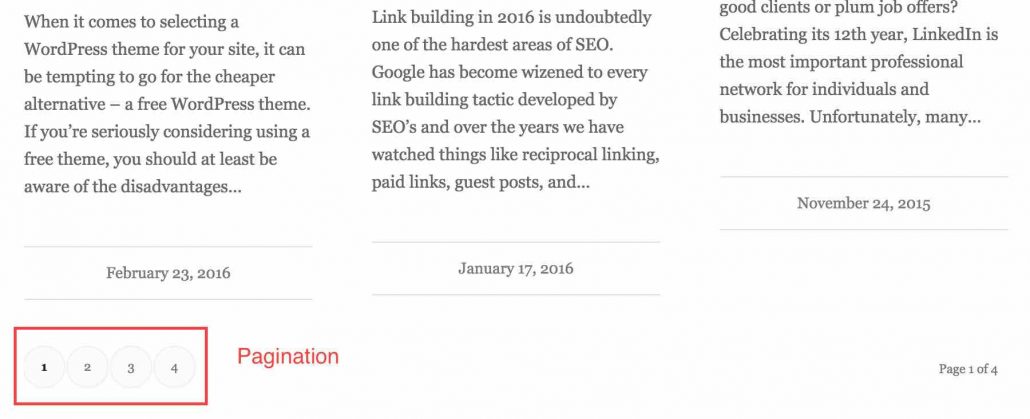

The process of segmenting content onto multiple pages and including paginated links to those pages. Here’s an example of pagination:

Google recommends that you indicate paginated content as this will help them index your content better on their search engine results pages.

Further reading:

Pagination: Best Practices for SEO & User Experience

Paid inclusion refers to the act of transacting in order to acquire a link back to one’s website. In other words, buying links.

This goes against Google’s Quality Guidelines and may result in a manual penalty.

This refers to the search results located above the organic search results, controlled by Google AdWords.

A Google algorithm focusing on penalising websites with low quality on-page content. Since 2016, Panda has been part of Google’s core ranking signals.

A Google algorithm focusing on penalising websites with spammy backlink profiles. Since 2016, Penguin has run in real time within the core search algorithm.

A Google algorithm aimed at providing more accurate and relevant local search results.

Refers to a user clicking on a search result and then clicking back to the search engine results pages because they did not find what they were initially searching for.

This is often discussed as a negative ranking signal, as it is one of the factors suggesting that a page did not serve its intended purpose.

A publicity technique usually involving a compelling news story that is distributed to news sites.

This used to be a very popular link building technique until Google indicated that optimised anchor text links in press releases fall under unnatural links that violate their guidelines.

Primary keywords are the highest-priority keywords when optimising a webpage for rankings, and they are placed in the most prominent areas of the page, such as the title tag.

A PBN is a network of sites usually created and maintained by an SEO or SEO agency. It is then used to manipulate the rankings of targeted websites by creating keyword-optimised anchor text links to them.

Google has shut down many PBNs and consistently issues manual penalties that devalue outgoing links from websites that link unnaturally to other websites.

Further reading:

Google Warning: Get A Free Product To Review, Nofollow That Link & Disclose

An important local ranking signal used by Google to determine the local ranking position of a business based on its proximity to the searcher.

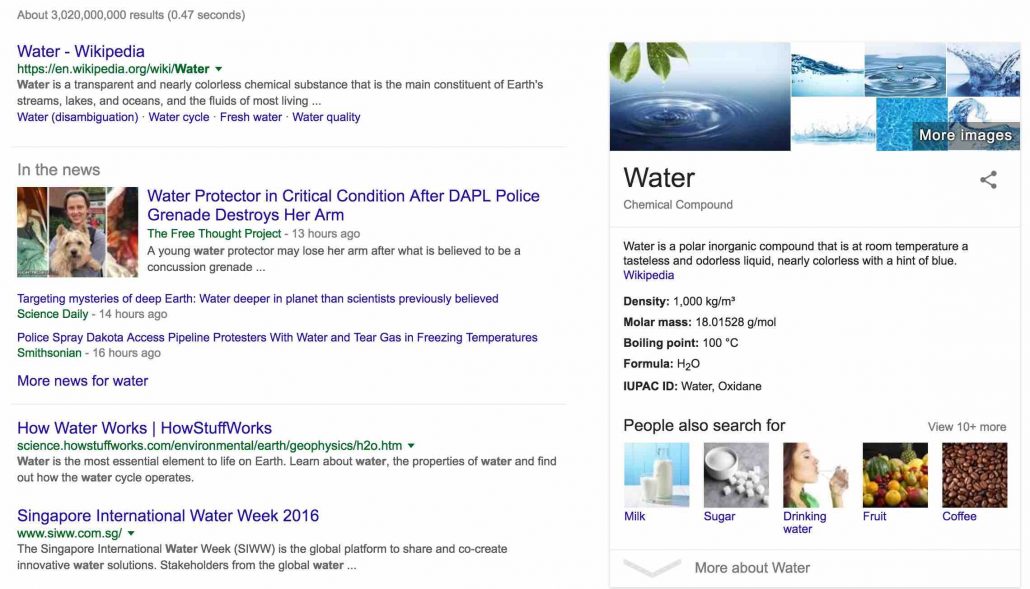

A ranking system used by Google to return diverse search results to search queries, particularly generic queries, branded search terms, and news search terms.

Take the example search query “water” and see what Google returns.

Google returns the definition of water in the first result, some of the latest news on water, how water works, and an organisation dealing with water solutions.

A “freshness” algorithm created by Google to return fresh results for search queries revolving around a trending topic.

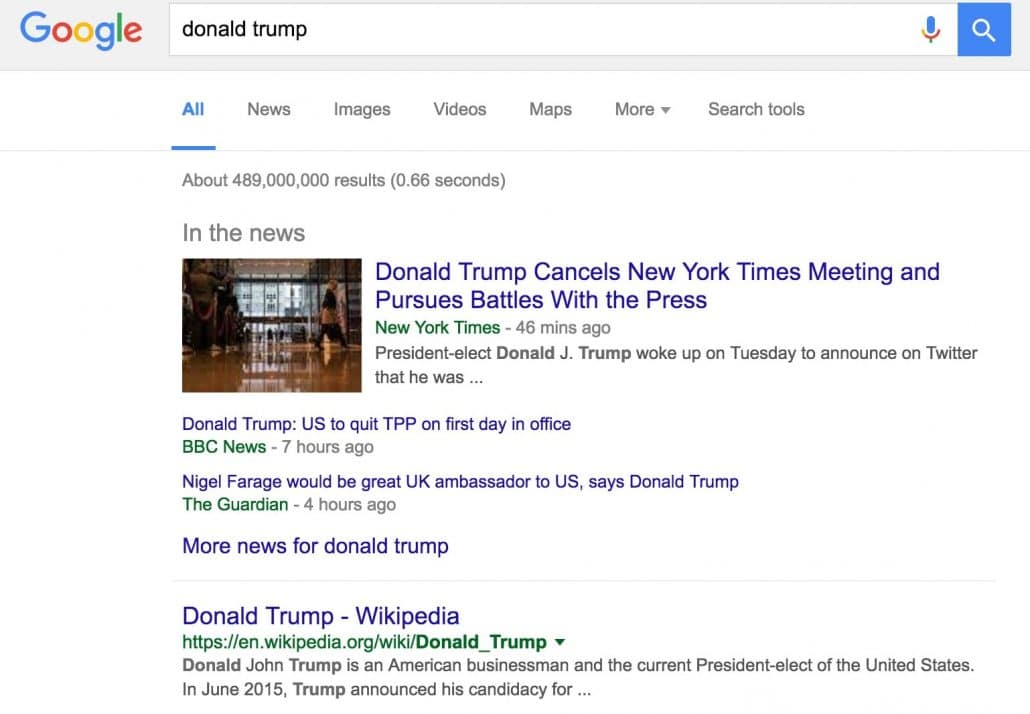

In the 2016 U.S. elections, the term Donald Trump spiked to a peak search volume of 30 million worldwide.

The key to appearing in fresh results is to publish breaking news stories as soon as possible.

Keywords or keyword phrases entered into a search engine with the intention of getting relevant results.

Query refinement refers to the process of correcting or refining a search query to return more relevant results. Query refinement can be done by either the search engine or the user.

In the case of query refinement by a search engine, the search engine will suggest related search terms, correct spelling errors, and attempt to automatically complete your search query as you are typing it out.
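Spelling correction is the easiest part of this to illustrate. The toy sketch below swaps each unrecognised word for its closest match in a small, hypothetical vocabulary using Python's standard `difflib.get_close_matches`; the 0.8 similarity cutoff is an arbitrary assumption. Real search engines learn corrections from query logs and context, not from a fixed word list.

```python
import difflib

# Hypothetical vocabulary; a real search engine derives corrections from query data.
VOCABULARY = ["apartment", "mortgage", "property", "real", "estate", "rental"]


def refine_query(query: str) -> str:
    """Replace each word with its closest vocabulary match, if one is close enough."""
    corrected = []
    for word in query.lower().split():
        matches = difflib.get_close_matches(word, VOCABULARY, n=1, cutoff=0.8)
        corrected.append(matches[0] if matches else word)
    return " ".join(corrected)


print(refine_query("apartmnet mortage"))
```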

RankBrain is a machine learning algorithm created by Google to help process and sort through search queries and return more useful and relevant results.

Further reading:

FAQ: All about the Google RankBrain algorithm

The ranking positions of a website’s target search queries, also commonly referred to as keyword rankings.

Software that allows you to check your search engine ranking positions for selected search phrases and keywords.

Here are some free rank checker tools:

Reciprocal linking refers to two or more different websites linking to one another.

In general, natural reciprocal linking is fine, such as when Website A links to a content resource on Website B, and Website B links to Website A’s blogpost. However, the links must not be manipulated, e.g. through over-optimised anchor text.

What isn’t acceptable is “Excessive link exchanges (“Link to me and I’ll link to you”) or partner pages exclusively for the sake of cross-linking” – as stated in Google’s Link Schemes.

A submission-based appeal to Google or Bing to lift a manual penalty imposed for spamdexing a website (failing to comply with Google Webmaster Guidelines and Bing Webmaster Guidelines).

Further reading:

Submitting a reconsideration request (Google)

The instance of sending users and search engines to a different URL than the one initially requested.

There are various types of redirects such as:

Refers to the referral traffic sent from other websites to yours typically through hyperlinks. The more quality backlinks earned, the more referral traffic from these sources.

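An HTML attribute used to mark up pages that have similar content but are targeted at different countries and/or languages.

For example, if you have a Japanese version at www.yoursite.com/jp and an English version at www.yoursite.com/en, you would put the following markup on both the Japanese and English versions:

<link rel="alternate" hreflang="ja" href="https://www.yoursite.com/jp" />
<link rel="alternate" hreflang="en" href="https://www.yoursite.com/en" />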

This will signal to Google that there are two geo-targeted versions of your website and help them to show the correct version on both google.co.jp and google.com.
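Because every version must carry the same full set of tags (including one pointing to itself), it is convenient to generate them from a single list. Below is a minimal sketch; the function name `hreflang_tags` is hypothetical, and the URLs reuse the yoursite.com example with an assumed https:// scheme.

```python
from html import escape


def hreflang_tags(versions: dict[str, str]) -> str:
    """Build the <link rel="alternate" hreflang> tags shared by every version.

    `versions` maps a language (or language-region) code to that version's URL.
    The same output goes on every version, so each page also references itself.
    """
    return "\n".join(
        f'<link rel="alternate" hreflang="{escape(code)}" href="{escape(url)}" />'
        for code, url in versions.items()
    )


print(hreflang_tags({
    "ja": "https://www.yoursite.com/jp",
    "en": "https://www.yoursite.com/en",
}))
```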

Further reading:

Hreflang: The Ultimate Guide – Yoast

Use hreflang for language and regional URLs – Google

An HTML attribute attached to the code behind a hyperlink to signal to search engines that the page does not endorse the destination URL.

Also commonly referred to as “nofollow links”, these links do not pass PageRank or link juice.

Relative URLs are much shorter than absolute URLs, displaying only the path e.g. /resource-page and omitting all other details such as the protocol e.g. https:// or domain name e.g. example.com.

This may cause on-page SEO problems such as:

Further reading:

Why Relative URLs Should be Forbidden for Web Developers – Yoast
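A relative path is resolved against the URL of the page it appears on, so the same href can point to different absolute URLs on different pages. This sketch uses Python's standard `urllib.parse.urljoin` to show that resolution; the page URL and links are made-up examples.

```python
from urllib.parse import urljoin

# Hypothetical page on which the links below appear.
page_url = "https://www.example.com/blog/post-1"

hrefs = ["/resource-page", "resource-page", "../about", "https://www.example.org/x"]
for href in hrefs:
    # urljoin resolves each href against the page URL, as a browser or crawler would.
    print(f"{href:28} -> {urljoin(page_url, href)}")
```

Note how "/resource-page" and "resource-page" resolve to different URLs: a missing leading slash is enough to send crawlers somewhere unintended.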

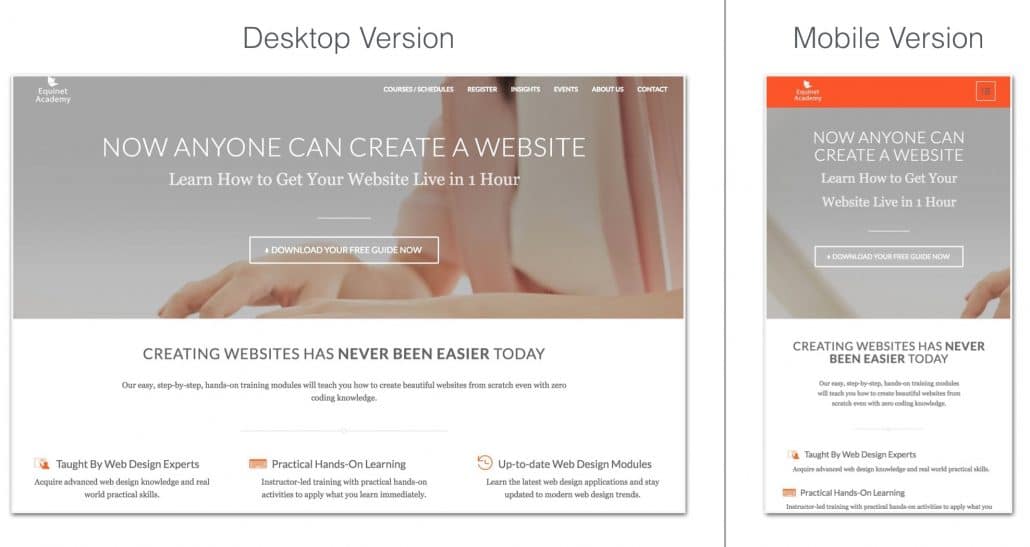

Describes how flexibly the content of a webpage adapts when it is requested from devices with different screen sizes, such as mobile phones and tablets, as in the following example:

To be on the safe side, you may want to check whether your responsive webpages pass Google’s Mobile Friendly Test tool.

Further reading:

Make sure your site’s ready for mobile-friendly Google search results

Reverse engineering is an SEO link building technique by which you run your competitor’s domain through a backlink checker tool like Ahrefs and analyse their backlink profile.

Once you’ve identified websites that have linked to your competitors and may also link to you, reach out to them (or to similarly themed websites) to earn links.

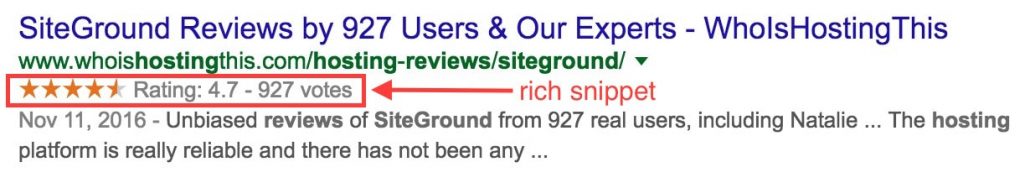

Rich snippets are enhanced search results generated from structured data markup (such as microdata using the Schema.org vocabulary) added to webpages. Their purpose is to make a webpage’s result on the search engine results pages more visually outstanding, and to display information related to the query.

Further reading:

Introduction to Structured Data

Robots, also referred to as search engine spiders, are computer programs written by search engine engineers to crawl webpages and process information for data storage and retrieval purposes.

A text file that website owners use to provide instructions to search engine spiders about which files and directories to ignore when crawling a website. The robots.txt file is stored in the root of the domain. Here’s an example of what it looks like:

User-agent: *
Disallow: /members-area

Where * signifies all search engine robots that are programmed to obey a robots.txt file, and Disallow: /members-area tells search engine robots not to crawl the member section of the website.

This conserves the crawl budget of a website by blocking access to unimportant directories, thus allowing more important sections of the website to be crawled and processed.
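You can test rules like these before deploying them. The sketch below feeds the example file to Python's standard `urllib.robotparser.RobotFileParser` and asks whether a crawler may fetch two made-up URLs. Python's parser follows the classic prefix-matching rules and may differ from Google's own parser on edge cases such as wildcards.

```python
from urllib.robotparser import RobotFileParser

ROBOTS_TXT = """\
User-agent: *
Disallow: /members-area
"""

parser = RobotFileParser()
# parse() takes the file's lines directly; read() would fetch /robots.txt over HTTP.
parser.parse(ROBOTS_TXT.splitlines())

for url in ["https://www.example.com/blog/",
            "https://www.example.com/members-area/login"]:
    print(url, parser.can_fetch("Googlebot", url))
```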

A curation of the best content on a related topic or industry in a blogpost. Also used as a link building technique by SEOs.

RSS feed stands for Really Simple Syndication or Rich Site Summary. It is an XML-format file in which web authors can update the feed list by adding new stories/blogposts and readers can access this list by subscribing to it.
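Because a feed is plain XML, reading it needs nothing more than an XML parser. Below is a minimal sketch that lists the item titles and links from a small, made-up RSS 2.0 feed using Python's standard `xml.etree.ElementTree`; the function name `feed_items` is hypothetical, and Atom feeds or namespaced extensions would need extra handling.

```python
import xml.etree.ElementTree as ET

# A minimal, made-up RSS 2.0 feed with two items.
FEED = """<?xml version="1.0"?>
<rss version="2.0">
  <channel>
    <title>Example Blog</title>
    <item><title>New post</title><link>https://www.example.com/new-post</link></item>
    <item><title>Older post</title><link>https://www.example.com/older-post</link></item>
  </channel>
</rss>"""


def feed_items(xml_text: str) -> list[tuple[str, str]]:
    """Return (title, link) for every <item> in an RSS 2.0 feed."""
    root = ET.fromstring(xml_text)
    return [(item.findtext("title"), item.findtext("link"))
            for item in root.iter("item")]


print(feed_items(FEED))
```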

Schema.org is a set of vocabulary types, properties, and enumeration values that can be used with a variety of encodings including RDFa, Microdata and JSON-LD to markup webpages, emails, and many other applications.

When used on webpages, it can create rich snippets that display marked-up data on the search engine results pages.
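Of the encodings above, JSON-LD is the easiest to generate, since it is a separate script block rather than attributes woven into the HTML. Below is a minimal sketch that builds a Schema.org `Article` description; the function name, headline, author, and date are hypothetical, and the properties a search engine needs for a given rich result type should be checked against its documentation.

```python
import json


def article_json_ld(headline: str, author: str, date_published: str) -> str:
    """Wrap Schema.org Article properties in a JSON-LD <script> tag."""
    data = {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "author": {"@type": "Person", "name": author},
        "datePublished": date_published,  # ISO 8601 date
    }
    return ('<script type="application/ld+json">\n'
            + json.dumps(data, indent=2)
            + "\n</script>")


print(article_json_ld("A-Z Glossary of SEO Terms", "Jane Doe", "2017-01-10"))
```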

Scraped content is content that is copied and/or slightly modified from other sources and published on your own site.

In general, this is a negative term used to describe stolen content and goes against Google’s Quality Guidelines – Scraped Content.

Previously known as Google Webmaster Tools, Google Search Console is a free web service that helps website owners monitor their site’s health and maintain their search presence on Google.

Webmasters can submit their websites to Google Search Console and verify their ownership. Once verified, webmasters can perform a range of actions including:

A program designed to allow users to search for and retrieve information on the World Wide Web.

Popular search engines worldwide include Google, Bing, Yahoo!, Baidu, Yandex, and Ask.com.

SERPs are the pages displaying links to webpages in response to a query entered by a search engine user. They contain two main types of results: paid search results (ads), for which advertisers pay search engines for every click, and organic search results, which are ranked by algorithms based on dozens of ranking factors.

A summarised version of a webpage that is displayed on the search engine results pages. This snippet can be customised by editing the meta description of the page.

A form of punishment dealt by search engines to websites that engage in spammy web activities in an effort to manipulate their search engine rankings.

This may come in one of two forms – An algorithmic penalty (punishment dealt by search engine algorithms) or a manual penalty (a manual action dealt by a human search engineer).

The process of submitting a website to the search engines and one of the ways to get into a search engine’s index.

Note: Submitting a website/webpage to a search engine does not guarantee indexation. Some reasons a website/webpage may not get indexed include duplicate content and thin/shallow content.

A collection of search queries recently performed by a search engine user. Based on a user’s search history, search engines may tailor search results to individual users, thus the results may appear different from user to user.

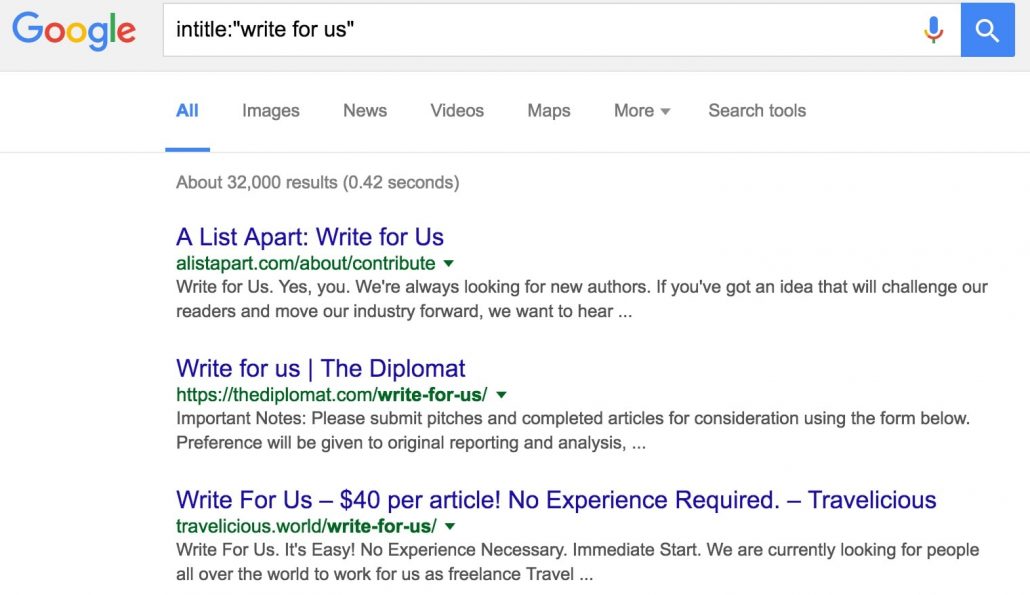

Special commands that can be added to search queries in order to filter the search results.

Here’s an example of a search operator that searches only for keywords found in a page title:

intitle:"write for us"

Another example of a search operator that filters results found only on a particular website:

site:www.example.com

A keyword or phrase entered into a search engine for the purpose of retrieving information (search results) related to the search query.

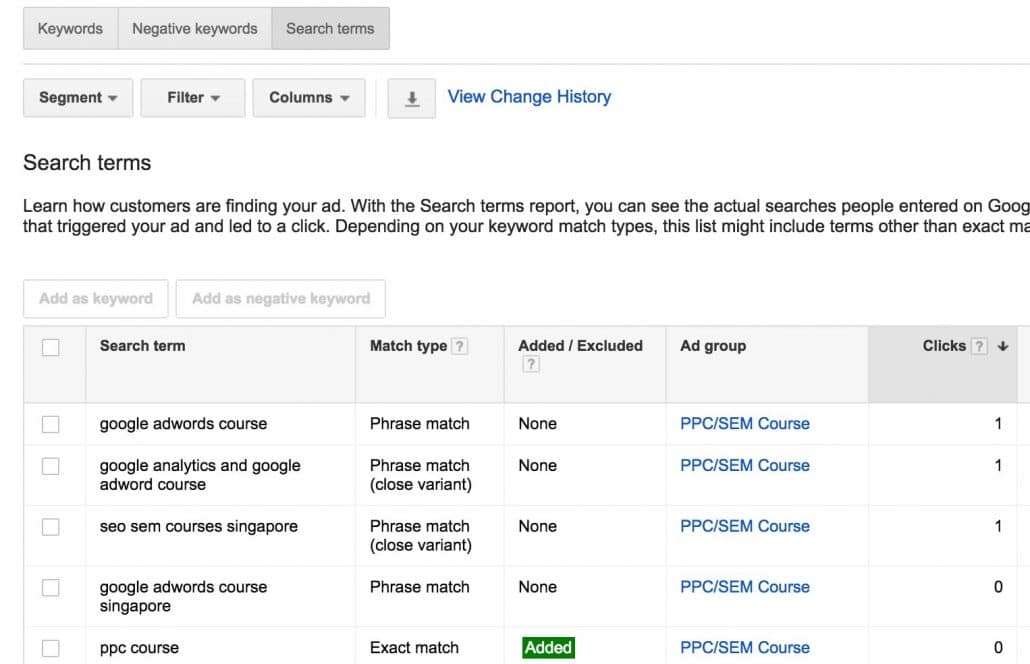

A report generated by Google AdWords that displays the exact search terms users entered before clicking on a paid ad result.

Here’s a sample of a search terms report:

The specific number of searches performed for a particular keyword or keyword phrase. Search volumes for specific keywords can be researched through keyword research tools such as:

Keywords that are of a lesser priority than primary keywords that need to be optimised on a website or webpage. Keywords of a higher priority go in the